April 30, 2026 · 5 min read

How a one-off helper script for one client’s VPS turned into the deployment toolkit I now use across every project, and why I made it agent-first along the way.

I had a client who needed to manage a handful of VPSes. Nothing fancy. A few Docker Compose stacks, some Postgres databases, the usual. The work was all the same shape: SSH in, pull the latest code, run migrations, restart containers, hope nothing broke, check the logs. So I did what anyone would do. I wrote a helper script.

That script was about 80 lines of bash. It deployed one stack to one VPS. I committed it to the project repo, named it deploy.sh, and forgot about it for a few weeks.

Then the client added another stack. And another VPS. And needed staging. And wanted nightly database backups. And asked if there was a way to rotate the GitHub deploy key without manually editing four .env files. The script grew. Then it sprawled. Then it became unmaintainable, so I rewrote it. Then the rewrite started sprawling too.

At some point I stopped fighting it and pulled the whole thing out into its own project. That project is Strut.

The thing about managing VPSes is that the work is too simple to justify Kubernetes and too repetitive to do by hand. Every team I’ve worked with that landed somewhere in that gap ended up with one of two things:

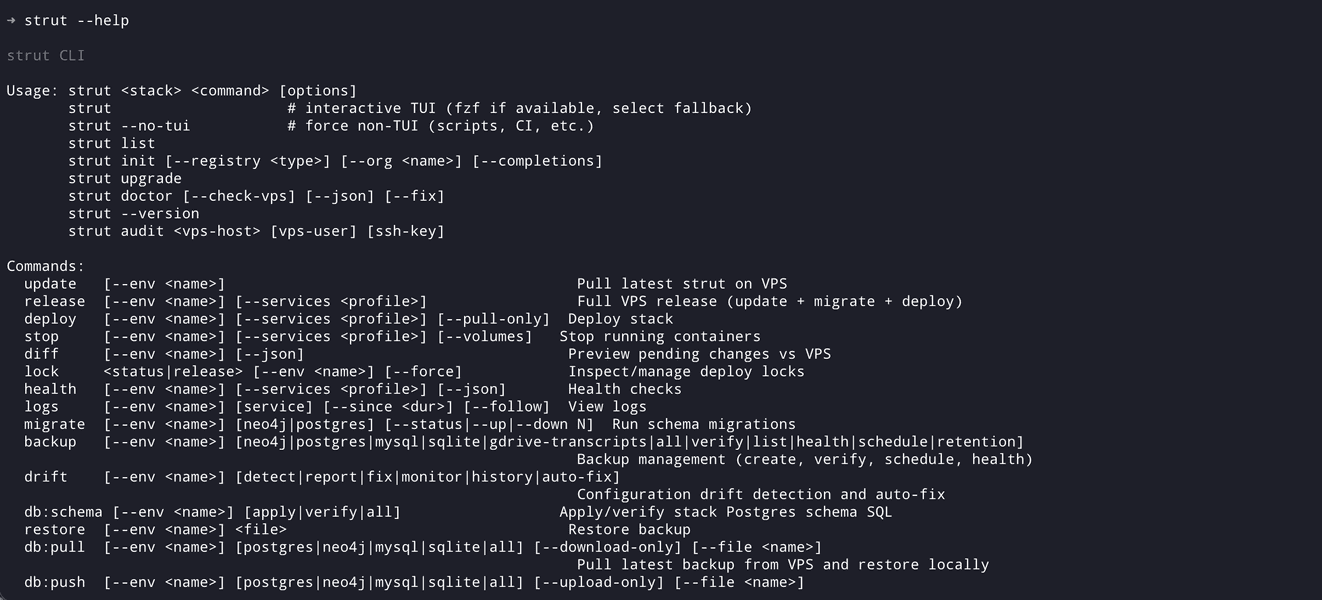

I wanted something that lived in the middle. Real workflow primitives like deploy, release, backup, restore, drift detection, secret rotation, and dry-runs, but built around the simplest possible transport layer: SSH and Docker. No daemons, no control planes, no proprietary agents. If the box has SSH and Docker, the tool should work.

That constraint shaped almost every design decision in Strut. The whole engine is bash, because bash is what’s on the other end of an SSH connection no matter what Linux distro you’re shipping to. Configuration is text files in the project repo, because text files diff well and survive being copy-pasted into a Slack message at 2am. Health checks read from a config file rather than being baked into the engine, because every stack has a different idea of what “healthy” means.
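To make the config-driven health check idea concrete, here is a minimal sketch. It is not Strut's actual code or file format: the `name|command` line layout, the `run_health_checks` function, and the example service names in the comments are all assumptions for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical sketch: the file format and function name are assumptions,
# not Strut's real implementation.
#
# Example config file (one check per line, "name|command"):
#   # comments and blank lines are ignored
#   api|curl -fsS http://localhost:8080/healthz
#   db|docker compose exec -T db pg_isready

# run_health_checks FILE
# Runs every check listed in FILE. Prints "ok <name>" or "FAIL <name>" for
# each one and returns 1 if any check failed, 0 otherwise.
run_health_checks() {
  local file name cmd failed
  file="$1"
  failed=0
  # The "|| [ -n ... ]" keeps a final line without a trailing newline.
  while IFS='|' read -r name cmd || [ -n "$name" ]; do
    # Skip blank lines and comments.
    case "$name" in ''|'#'*) continue ;; esac
    # stdin is redirected so the check cannot consume the rest of the file.
    if bash -c "$cmd" >/dev/null 2>&1 </dev/null; then
      echo "ok $name"
    else
      echo "FAIL $name"
      failed=1
    fi
  done < "$file"
  return "$failed"
}
```

The point of the design is that the engine only knows how to run a list of checks; what counts as "healthy" for a given stack stays in a file that lives next to that stack.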

The piece I’m proudest of is the architecture, which I documented in detail in the Architecture wiki page. There are two trees:

~/.strut/ is the engine: the CLI entrypoint, the shell library modules, the templates. Installed once, shared across every project.

Your project repo holds strut.conf, your stack definitions in stacks/, and your environment files (.prod.env, .staging.env). This is the config.

The engine has zero hardcoded values. No service names, no port numbers, no organization slugs. Everything that varies between projects lives in the config tree. The result is that I can strut upgrade the engine across all my machines without touching a single project, and I can scaffold a new project with strut init without thinking about what the engine looks like.

This split sounds obvious in retrospect, but it took me three rewrites to get there. The early versions had service-specific logic mixed in with the engine and it made every new client project a fork. Pulling the config out into a separate tree was the unlock that let Strut go from “the script I use for one client” to “the tool I use for everything.”
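One way an engine can stay free of project-specific values is to discover the config tree at runtime. The sketch below shows that idea under stated assumptions: the function names are hypothetical, and placing the environment files at the project root next to strut.conf is my guess for the example, not a description of how Strut resolves them.

```shell
#!/usr/bin/env bash
# Hypothetical sketch of the engine/config split; names are illustrative.

# find_project_root [START_DIR]
# Walks upward from START_DIR (default: current directory) until it finds a
# directory containing strut.conf. Prints that directory; returns 1 if none.
find_project_root() {
  local dir
  dir=$(cd "${1:-.}" && pwd -P) || return 1
  while :; do
    if [ -f "$dir/strut.conf" ]; then
      printf '%s\n' "$dir"
      return 0
    fi
    [ "$dir" = "/" ] && return 1
    dir=$(dirname "$dir")
  done
}

# env_file_for PROJECT_ROOT ENV
# Resolves the per-environment file by naming convention (.prod.env,
# .staging.env, ...), so no environment name is hardcoded in the engine.
# Assumes the env files sit in PROJECT_ROOT.
env_file_for() {
  local root env file
  root="$1"
  env="$2"
  file="$root/.${env}.env"
  if [ ! -f "$file" ]; then
    echo "no env file for '$env' in $root" >&2
    return 1
  fi
  printf '%s\n' "$file"
}
```

With something like this, the same installed engine can be run from any directory inside any project, and adding a new environment means adding a file rather than editing the engine.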

Somewhere around v0.10, the way I was working changed. I’d started using Claude Code, Cursor, and other agentic tooling for most of my day-to-day development. Less typing, more describing. And it hit me that deployment commands were a perfect fit for an agent. They’re high-context (you need to know what’s deployed, what env you’re on, what the failure modes are) and low-creativity (the actual sequence of steps is mechanical). That’s exactly the kind of work I’d rather hand off.

The problem was that no agent could just run strut my-app release --env prod usefully. It didn’t know which stack to pick. It didn’t know what env to target. It didn’t know to check drift before releasing. It didn’t know that on this particular project, you always run backup postgres before release, but on this other one you don’t because the database is managed elsewhere.

So I built two things into Strut to fix that. The first is agent steering: prompt files that live in the project and teach an agent how to use Strut for that specific stack. The second is a skills library: pre-built skills for deploy, backup, rollback, scale, logs, and a handful of other common operations. Drop them into your agent’s config and the agent suddenly knows how to operate your infrastructure.

The result is the workflow I actually wanted. I describe what I want in plain English: “release the API stack to staging, but pull a fresh prod backup down first so I can test against real data.” The agent reads strut.conf, sees which stacks exist, picks the right env, runs the right sequence with --dry-run first, shows me the plan, and runs it. I get to spend my attention on the part that needs judgment, not the part that’s just typing.

This is the bit I’m most excited about, and it’s also the part of the project where I think the most interesting work is still ahead. The skills library is small right now. The steering files cover the common cases. But the surface area for “things an agent could do well with the right context” is enormous, and Strut’s config-driven design means that surface area scales naturally as the tool grows.

The tagline on the site is “Deploy Docker stacks. No drama.” What that means in practice is the standard release workflow looks like this:

strut my-app release --env prod

That single command syncs the repo on the VPS, runs migrations, deploys the containers, and verifies health checks. If anything fails, it tells you exactly where, and the database is already backed up before any of this starts.
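The "tells you exactly where it failed" behavior comes down to running named steps in order and stopping at the first one that fails. Here is a minimal sketch of that pattern. The step names mirror the description above, but the step bodies are empty placeholders and `run_steps` is a hypothetical helper, not Strut's implementation.

```shell
#!/usr/bin/env bash
# Hypothetical sketch: step names follow the prose; bodies are placeholders.

# run_steps STEP...
# Runs each named step (a shell function or command) in order. Stops at the
# first failure, reports which step failed on stderr, and returns 1.
run_steps() {
  local step
  for step in "$@"; do
    echo "==> $step"
    if ! "$step"; then
      echo "FAILED at step: $step" >&2
      return 1
    fi
  done
  echo "release complete"
}

# Placeholder steps. In a real tool each would run commands over SSH.
backup_database()   { :; }
sync_repo()         { :; }
run_migrations()    { :; }
deploy_containers() { :; }
verify_health()     { :; }

# The backup runs first, so it exists before anything else is touched.
release() {
  run_steps backup_database sync_repo run_migrations deploy_containers verify_health
}
```

Because every step has a name, the failure message points at a specific stage rather than a line number buried in a long script.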

The other commands I reach for most:

strut my-app drift --env prod # what's different from local?

strut my-app db:pull --env prod # pull prod db down to local

strut my-app keys db:rotate postgres --env prod # rotate db credentials

strut my-app logs api --follow --env prod # tail logs from one service

strut my-app health --env prod --json # health check, machine-readable

Every destructive command supports --dry-run, and that’s not a feature I added late; it’s been baked in from the rewrites. When you’re managing client infrastructure at 11pm because something broke, the difference between “see what would happen” and “do it” is the difference between sleep and a war story.
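For anyone who hasn't built dry-run into a shell tool before, the usual pattern is to route every side-effecting command through one wrapper. This is a generic sketch of that pattern, not Strut's code; `DRY_RUN`, `run`, and `remove_old_backups` are names I made up for the example.

```shell
#!/usr/bin/env bash
# Generic dry-run pattern; a sketch, not Strut's actual code.
# DRY_RUN, run() and remove_old_backups() are illustrative names.

DRY_RUN=${DRY_RUN:-0}

# run CMD [ARGS...]
# Executes the command as given, or only prints it when DRY_RUN=1.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "[dry-run] $*"
    return 0
  fi
  "$@"
}

# Example of a destructive operation routed through run().
remove_old_backups() {
  local dir
  dir="$1"
  run rm -rf -- "$dir/old"
}
```

The discipline is that nothing destructive calls a command directly. Once that holds, a dry-run flag only has to set one variable, which is why adding it from the start costs so little compared with retrofitting it.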

A few things stand out from the journey from deploy.sh to v0.20.

Configuration is a feature, not a tax. The engine is small and the configs are explicit. That sounds like more work upfront, and it is, but it pays back the first time you onboard a new project and realize it’s just a strut init away from working the same as everything else.

Bash holds up better than I expected. The engine is 99.7% Bash. I’d written enough Bash earlier in my career to know its quirks (and to write tests in Bats), but I was braced for it to feel limiting. It mostly didn’t. In the few places where it does, I’ve been able to work around it with disciplined module structure rather than reaching for a different language.

Dry-runs are a non-negotiable. Every meaningful command supports --dry-run. Adding this to a destructive operation later is a pain. Adding it from the start is almost free. Strut had a few rough edges before I made dry-run universal, and zero rough edges since.

Building agent-first changes what you build. If I’d written Strut without thinking about agents, the CLI surface would look different. There would be more hidden state, more shortcuts, more “magic” defaults that a human gets used to but an agent has to guess at. Designing for an agent forced me to make the tool more predictable and more legible, which turned out to be better for humans too.

Strut is still pre-1.0 (latest is v0.20.1). It’s running two of my own stacks and 10+ client stacks in production, but I’m holding off on a v1.0 tag until the agent steering and skills library are more battle-tested and a few more wiki sections are filled out.

Things on the near roadmap: better blue/green deploys, more notification integrations, expanding the plugin system so other folks can add registry types and database engines without forking the engine, and growing the skills library so more of the day-to-day “agent does the work” surface is covered out of the box.

If any of this resonates – whether you’ve been managing VPSes with bespoke scripts, or you’re trying to figure out how to make AI agents useful for ops work, or you just want to see how a Bash codebase scales – give it a try. The project page has the full overview, the repo is at github.com/gfargo/strut, the marketing site is strut.griffen.codes, and there’s a Discord linked from both if you’d rather chat than file an issue.

I’d love to hear what breaks for you. The 21 releases I’ve shipped so far have all started with someone hitting a wall I hadn’t thought about, and I’d rather find the next wall before you do.
